Why Metrics Validation Is Important

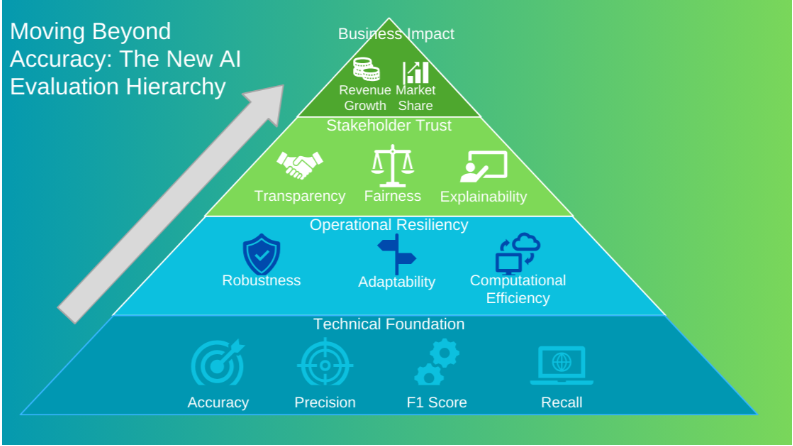

AI models are only as good as the metrics used to evaluate them. Validating the right metrics ensures that performance is assessed on more than accuracy alone: fairness, reliability, and real-world impact matter as well.

Key Metrics We Validate

- Accuracy and Precision
  - Measure how often predictions are correct overall (accuracy) and how often positive predictions are correct (precision).
  - Validate against benchmark datasets for consistency.

- Recall and F1 Score
  - Measure how many of the actual positives the model captures (recall).
  - Balance precision and recall in a single score (F1) for robust evaluation.

- Fairness Metrics
  - Check for demographic parity and equal opportunity.
  - Validate that models perform comparably across different groups.

- Robustness and Reliability
  - Test models under varied, real-world scenarios.
  - Validate stability against noisy or adversarial inputs.

- Explainability Metrics
  - Assess the transparency and interpretability of AI decisions.
  - Ensure outputs can be traced back and explained clearly.
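
To make the classification and fairness metrics listed above concrete, here is a minimal Python sketch of how they might be computed for a binary classifier. It is an illustration under stated assumptions, not the framework described on this page: the function names (`confusion_counts`, `classification_metrics`, `fairness_gaps`) are hypothetical, labels are assumed to be 0/1 with 1 as the positive class, and each fairness gap is taken as the largest difference between any two groups, which is one common convention among several.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, FN, TN for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def safe_div(num, den):
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 (harmonic mean of precision and recall)."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


def fairness_gaps(y_true, y_pred, groups):
    """Largest between-group gap in selection rate (demographic parity)
    and in true positive rate (equal opportunity). Assumed convention: max - min."""
    selection_rates, true_positive_rates = [], []
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        tp, fp, fn, tn = confusion_counts(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )
        selection_rates.append(safe_div(tp + fp, len(idx)))
        true_positive_rates.append(safe_div(tp, tp + fn))
    return {
        "demographic_parity_diff": max(selection_rates) - min(selection_rates),
        "equal_opportunity_diff": max(true_positive_rates) - min(true_positive_rates),
    }


if __name__ == "__main__":
    # Toy data, invented purely for illustration.
    y_true = [1, 0, 1, 1, 0, 1, 0, 0]
    y_pred = [1, 0, 0, 1, 1, 1, 0, 0]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(classification_metrics(y_true, y_pred))
    print(fairness_gaps(y_true, y_pred, groups))
```

A gap of zero means the groups are treated identically on that criterion. In this toy data both groups are selected at the same rate, yet their true positive rates differ, which shows why demographic parity and equal opportunity are checked separately.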

Our Role

We go beyond standard accuracy checks. Our metrics validation framework measures AI systems on fairness, trustworthiness, and real-world readiness.